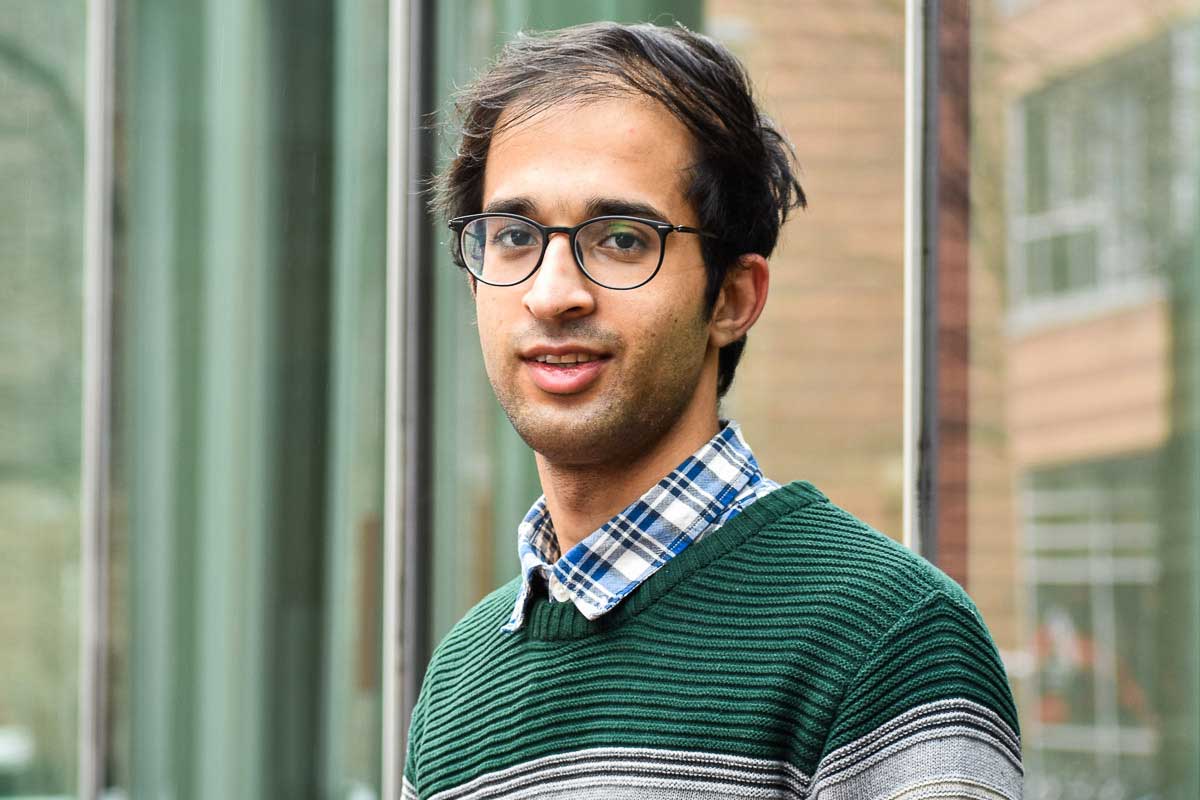

Christo Wilson

Professor, Associate Dean of Undergraduate Programs

Research interests

- Algorithm auditing, specifically the fairness of black-box algorithmic systems

- Online tracking and privacy

- Public key infrastructures like SSL/TLS and DNSSEC

Education

- PhD in Computer Science, University of California, Santa Barbara

- MS in Computer Science, University of California, Santa Barbara

- BS in Computer Science, University of California, Santa Barbara

Biography

Christo Wilson is a professor and the associate dean of undergraduate programs in the Khoury College of Computer Sciences at Northeastern University, based in Boston. He is a founding member of Northeastern's Cybersecurity and Privacy Institute.

Wilson’s research lies at the intersection of Big Data, security, and privacy, and draws on methods from the computer, social, political, and economic sciences. He is a 2019 Sloan Fellow and a 2019–20 Fellow at the Berkman Klein Center for Internet & Society. His work has been supported by an NSF CAREER Award, the Sloan Foundation, the Mozilla Foundation, the Knight Foundation, the Democracy Fund, the Data Transparency Lab, the European Commission, Google, and Verisign Labs.

Wilson's research has earned widespread recognition, including best paper awards at SIGCOMM, NDSS, and ICWSM, as well as honorable mentions at CHI and CSCW. His work on improving TLS security was recognized with an IEEE Cybersecurity Award for Innovation, and his work on understanding the impact of policy on DNSSEC deployment was honored with an IRTF/Internet Society Applied Networking Research Prize. Additionally, Wilson's work on modeling the privacy implications of online advertising received a Privacy Papers for Policymakers Award from the Future of Privacy Forum. His work has been covered extensively in the press, including by the CBS Evening News, Good Morning America, The Wall Street Journal, The Boston Globe, and The Washington Post.

Wilson is a member of several academic communities. In 2018, he served as co-general chair of the inaugural ACM Conference on Fairness, Accountability, and Transparency, and he continues to serve on the conference's executive committee. He regularly serves on the program committees for conferences such as IMC, WWW, ICWSM, IEEE Security and Privacy, and PETS.


Recent publications


Determinants and Effects of Buy Box Suppression on Amazon

Citation: Jeffrey L. Gleason, Shuo Zhang, Christo Wilson. (2026). Determinants and Effects of Buy Box Suppression on Amazon. WWW, 273-284. https://doi.org/10.1145/3774904.3792473

Inferring Users’ Demographics and Sensitive Interests Using the Topics API

Citation: Athicha Srivirote, Muhammad Abu Bakar Aziz, Jeffrey L. Gleason, Desheng Hu, Christo Wilson. (2026). Inferring Users' Demographics and Sensitive Interests Using the Topics API. WWW, 3438-3448. https://doi.org/10.1145/3774904.3792660

Perceptions in Pixels: Analyzing Perceived Gender and Skin Tone in Real-world Image Search Results

Citation: Jeffrey L. Gleason, Avijit Ghosh, Ronald E. Robertson, Christo Wilson. (2024). Perceptions in Pixels: Analyzing Perceived Gender and Skin Tone in Real-world Image Search Results. WWW, 1249-1259. https://doi.org/10.1145/3589334.3645666

Hammurabi: A Framework for Pluggable, Logic-based X.509 Certificate Validation Policies

Citation: James Larisch, Waqar Aqeel, Michael Lum, Yaelle Goldschlag, Kasra Torshizi, Leah Kannan, Yujie Wang, Taejoong Chung, Dave Levin, Bruce M. Maggs, Alan Mislove, Bryan Parno, and Christo Wilson. (2022). "Hammurabi: A Framework for Pluggable, Logic-Based X.509 Certificate Validation Policies". In Proceedings of the 2022 ACM SIGSAC Conference on Computer and Communications Security (CCS ’22), November 7–11, 2022, Los Angeles, CA, USA. ACM, New York, NY, USA, 15 pages. DOI: 10.1145/3548606.3560594

Setting the Bar Low: Are Websites Complying With the Minimum Requirements of the CCPA?

Citation: Maggie Van Nortwick and Christo Wilson. "Setting the Bar Low: Are Websites Complying With the Minimum Requirements of the CCPA?". Proceedings on Privacy Enhancing Technologies (PoPETS), 2022(1), January, 2022.

A Comparative Study of Dark Patterns Across Mobile and Web Modalities

Citation: Johanna Gunawan, Amogh Pradeep, David Choffnes, Woodrow Hartzog, and Christo Wilson. "A Comparative Study of Dark Patterns Across Mobile and Web Modalities". Proceedings of the ACM: Human-Computer Interaction, 5(CSCW2), October, 2021. DOI: 10.1145/3479521

When Fair Ranking Meets Uncertain Inference

Citation: Avijit Ghosh, Ritam Dutt, and Christo Wilson. "When Fair Ranking Meets Uncertain Inference". In Proceedings of the ACM SIGIR Conference on Research and Development in Information Retrieval (SIGIR 2021). Virtual Event, Canada, July, 2021. DOI: 10.1145/3506803

Building and Auditing Fair Algorithms: A Case Study in Candidate Screening

Citation: Christo Wilson, Avijit Ghosh, Shan Jiang, Alan Mislove, Lewis Baker, Janelle Szary, Kelly Trindel, and Frida Polli. "Building and Auditing Fair Algorithms: A Case Study in Candidate Screening." In Proceedings of the Conference on Fairness, Accountability, and Transparency (FAccT 2021). Virtual Event, Canada, March, 2021. DOI: 10.1145/3442188.3445928

Structurizing Misinformation Stories via Rationalizing Fact-Checks

Citation: Shan Jiang, Christo Wilson. (2021). Structurizing Misinformation Stories via Rationalizing Fact-Checks. ACL/IJCNLP (1), 617-631. https://doi.org/10.18653/v1/2021.acl-long.51

Modeling and Measuring Expressed (Dis)belief in (Mis)information

Citation: Shan Jiang, Miriam Metzger, Andrew Flanagin, and Christo Wilson. "Modeling and Measuring Expressed (Dis)belief in (Mis)information". In Proceedings of the International AAAI Conference on Web and Social Media (ICWSM 2020). Atlanta, Georgia, June, 2020.

A Longitudinal Analysis of the ads.txt Standard

Citation: Muhammad Ahmad Bashir, Sajjad Arshad, Engin Kirda, William K. Robertson, Christo Wilson. (2019). A Longitudinal Analysis of the ads.txt Standard. Internet Measurement Conference, 294-307. https://doi.org/10.1145/3355369.3355603

Auditing the Personalization and Composition of Politically-Related Search Engine Results Pages

Citation: Ronald E. Robertson, David Lazer, and Christo Wilson. 2018. Auditing the Personalization and Composition of Politically-Related Search Engine Results Pages. In Proceedings of the 2018 World Wide Web Conference (WWW '18). International World Wide Web Conferences Steering Committee, Republic and Canton of Geneva, CHE, 955–965. DOI: 10.1145/3178876.3186143