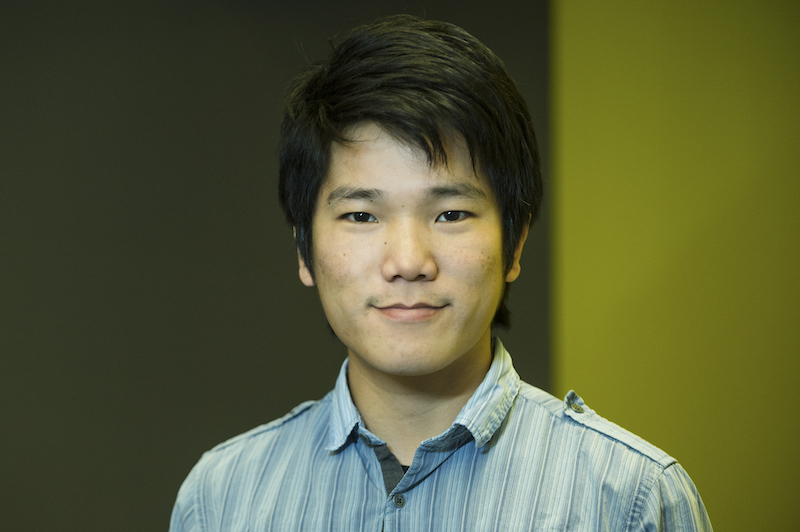

Gene Cooperman

(he/him/his)

Professor

Research interests

- Fault tolerance and transparent checkpointing

- Supercomputing, parallel computing, cloud computing

- Formal verification

- Cybersecurity

Education

- PhD in Applied Mathematics, Brown University

- BS in Mathematics and Physics, University of Michigan

Biography

Gene Cooperman is a professor in the Khoury College of Computer Sciences at Northeastern University, based in Boston. He is an affiliated faculty member in the College of Engineering.

Cooperman has worked in a number of interdisciplinary research areas, including applied mathematics, computational and symbolic algebra, numerical analysis, computing in high-energy physics, bioinformatics, high-performance computing, and computer systems. He leads Northeastern's High Performance Computing Laboratory. He also leads an Inria associate team in a three-year project called "FogRein: Steering Efficiency for Distributed Applications." He has co-authored more than 100 refereed publications, advised doctoral students, and led several open-source software projects.

Before joining Northeastern, Cooperman was a principal member of technical staff at GTE Laboratories from 1980 to 1986. He also held a five-year IDEX Chair of Attractivity position at the University of Toulouse in France, as well as visiting research positions at Concordia University, CERN, and Inria. As a result of his work at CERN, Cooperman joined the Geant4 Collaboration and contributed to the foundational paper "GEANT4 - A Simulation Toolkit" — the most widely cited paper in high-energy physics, with more than 25,000 citations.

Recent publications


HotSwap: Enabling Live Dependency Sharing in Serverless Computing

Citation: Rui Li, Devesh Tiwari, Gene Cooperman. (2025). HotSwap: Enabling Live Dependency Sharing in Serverless Computing. CLOUD, pp. 152–162. https://doi.org/10.1109/CLOUD67622.2025.00025

BootSeer: Analyzing and Mitigating Initialization Bottlenecks in Large-Scale LLM Training

Citation: Rui Li, Xiaoyun Zhi, Jinxin Chi, Menghan Yu, Lixin Huang, Jia Zhu, Weilun Zhang, Xing Ma, Wenjia Liu, Zhicheng Zhu, Daowen Luo, Zuquan Song, Xin Yin, Chao Xiang, Shuguang Wang, Wencong Xiao, Gene Cooperman. (2025). BootSeer: Analyzing and Mitigating Initialization Bottlenecks in Large-Scale LLM Training. CoRR, abs/2507.12619. https://doi.org/10.48550/arXiv.2507.12619

The Case for ABI Interoperability in a Fault Tolerant MPI

Citation: Yao Xu, Grace Nansamba, Anthony Skjellum, Gene Cooperman. (2025). The Case for ABI Interoperability in a Fault Tolerant MPI. CoRR, abs/2503.11138. https://doi.org/10.48550/arXiv.2503.11138

Enabling Practical Transparent Checkpointing for MPI: A Topological Sort Approach

Citation: Yao Xu, Gene Cooperman. (2024). Enabling Practical Transparent Checkpointing for MPI: A Topological Sort Approach. CoRR, abs/2408.02218. https://doi.org/10.48550/arXiv.2408.02218

Implementation-Oblivious Transparent Checkpoint-Restart for MPI

Citation: Yao Xu, Leonid Belyaev, Twinkle Jain, Derek Schafer, Anthony Skjellum, Gene Cooperman. (2023). Implementation-Oblivious Transparent Checkpoint-Restart for MPI. CoRR, abs/2309.14996. https://doi.org/10.48550/arXiv.2309.14996

Collective Vector Clocks: Low-Overhead Transparent Checkpointing for MPI

Citation: Yao Xu, Gene Cooperman. (2022). Collective Vector Clocks: Low-Overhead Transparent Checkpointing for MPI. CoRR, abs/2212.05701. https://doi.org/10.48550/arXiv.2212.05701

McMini: A Programmable DPOR-based Model Checker for Multithreaded Programs

Citation: Maxwell Pirtle, Luka Jovanovic, Gene Cooperman. (2022). McMini: A Programmable DPOR-based Model Checker for Multithreaded Programs. CoRR, abs/2212.05468. https://doi.org/10.48550/arXiv.2212.05468

CRAC: Checkpoint-Restart Architecture for CUDA with Streams and UVM

Citation: Twinkle Jain, Gene Cooperman. (2020). CRAC: Checkpoint-Restart Architecture for CUDA with Streams and UVM. Proceedings of the International Conference for High Performance Computing, Networking, Storage and Analysis (SC'20), IEEE Computer Society, pp. 1083–1097, Nov. 2020.

MANA for MPI: MPI-Agnostic Network-Agnostic Transparent Checkpointing

Citation: Rohan Garg, Gregory Price, Gene Cooperman. (2019). MANA for MPI: MPI-Agnostic Network-Agnostic Transparent Checkpointing. Proc. of the 28th Int. Symp. on High Performance Parallel and Distributed Computing, Phoenix, AZ, USA, ACM, pp. 49–60, June 2019.

CRUM: Checkpoint-Restart Support for CUDA’s Unified Memory

Citation: Rohan Garg, Apoorve Mohan, Michael Sullivan, Gene Cooperman. (2018). CRUM: Checkpoint-Restart Support for CUDA's Unified Memory. Proc. of IEEE Int. Conf. on Cluster Computing (Cluster'18), pp. 302–313, 2018.

Functional Classification of Protein Structures by Local Structure Matching in Graph Representation

Citation: Caitlyn L. Mills, Rohan Garg, Joslynn S. Lee, Liang Tian, Alexandru Suciu, Gene Cooperman, Penny J. Beuning, Mary Jo Ondrechen. (2018). Functional Classification of Protein Structures by Local Structure Matching in Graph Representation. Protein Science 27(6), pp. 1125–1135, 2018.

Transparently Checkpointing Software Test Benches to Improve Productivity of SoC Verification in an Emulation Environment

Citation: Ankit Garg, Suresh Krishnamurthy, Gene Cooperman, Rohan Garg, Jeff Evans. (2018). Transparently Checkpointing Software Test Benches to Improve Productivity of SoC Verification in an Emulation Environment. 2018 Design and Verification Conference and Exhibition (DVCON-US 2018).

Recent Developments in Geant4

Citation: J. Allison et al. (99 co-authors in total, including G. Cooperman). (2016). Recent Developments in Geant4. Nuclear Instruments and Methods in Physics Research Section A: Accelerators, Spectrometers, Detectors and Associated Equipment 835, pp. 186–225, Nov. 1, 2016.

Transparent Checkpoint-Restart over InfiniBand

Citation: Jiajun Cao, Gregory Kerr, Kapil Arya, Gene Cooperman. (2014). Transparent Checkpoint-Restart over InfiniBand. ACM Symposium on High Performance Parallel and Distributed Computing (HPDC'14), ACM Press, pp. 13–24, 2014.