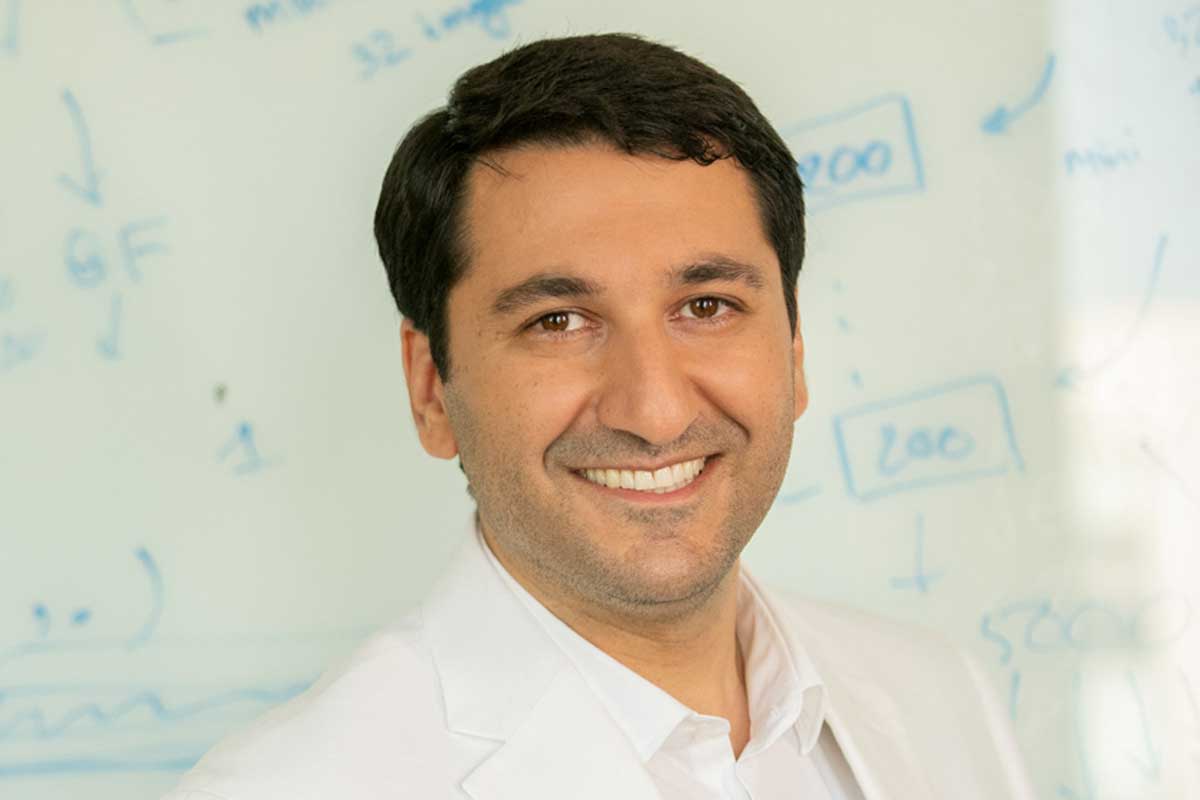

Ehsan Elhamifar

Associate Professor, Affiliate Faculty with the College of Engineering

Research interests

- Artificial intelligence

- Computer vision

- Machine learning

Education

- PhD in Electrical and Computer Engineering, Johns Hopkins University

- MS in Engineering, Johns Hopkins University

- MS in Electrical Engineering, Sharif University of Technology — Iran

- BS in Biomedical Engineering, Amirkabir University of Technology — Iran

Biography

Ehsan Elhamifar is an associate professor in the Khoury College of Computer Sciences at Northeastern University, based in Boston. He is affiliated with the College of Engineering.

Elhamifar develops AI that understands and learns from complex human activities and scenes using videos and multi-modal data, learns its tasks from fewer examples and less annotated data, and makes real-time inferences as new data arrives. He also combines these AI systems with AR and VR technologies to aid people in performing complex procedural and physical tasks. In doing so, he draws on a wide range of concepts and disciplines, including long-form and egocentric video understanding, action segmentation, procedure and low-shot learning, fine-grained and multi-label recognition, video summarization, sequence alignment, adversarial attacks, subset selection, manifold clustering, trajectory prediction, sparse and low-rank recovery, and submodular maximization.

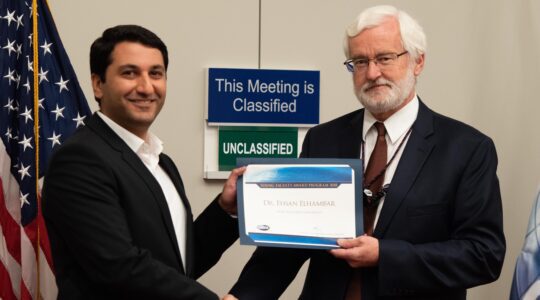

Elhamifar is the director of the Mathematical, Computational and Applied Data Science (MCADS) Lab, which focuses on computer vision, machine learning, and AI. He is also a former postdoctoral scholar at UC Berkeley and a recipient of the DARPA Young Faculty Award.

Recent publications

- Error Recognition in Procedural Videos Using Generalized Task Graph

  Citation: Shih-Po Lee, Ehsan Elhamifar. (2025). Error Recognition in Procedural Videos Using Generalized Task Graph. ICCV, 10009-10021. https://doi.org/10.1109/ICCV51701.2025.00933

- Compositional Targeted Multi-Label Universal Perturbations

  Citation: Hassan Mahmood, Ehsan Elhamifar. (2025). Compositional Targeted Multi-Label Universal Perturbations. CVPR, 20580-20591. https://openaccess.thecvf.com/content/CVPR2025/html/Mahmood_Compositional_Targeted_Multi-Label_Universal_Perturbations_CVPR_2025_paper.html

- DeCafNet: Delegate and Conquer for Efficient Temporal Grounding in Long Videos

  Citation: Zijia Lu, A S. M. Iftekhar, Gaurav Mittal, Tianjian Meng, Xiawei Wang, Cheng Zhao, Rohith Kukkala, Ehsan Elhamifar, Mei Chen. (2025). DeCafNet: Delegate and Conquer for Efficient Temporal Grounding in Long Videos. CVPR, 24066-24076. https://openaccess.thecvf.com/content/CVPR2025/html/Lu_DeCafNet_Delegate_and_Conquer_for_Efficient_Temporal_Grounding_in_Long_CVPR_2025_paper.html

- Understanding Multi-Task Activities from Single-Task Videos

  Citation: Yuhan Shen, Ehsan Elhamifar. (2025). Understanding Multi-Task Activities from Single-Task Videos. CVPR, 19120-19131. https://openaccess.thecvf.com/content/CVPR2025/html/Shen_Understanding_Multi-Task_Activities_from_Single-Task_Videos_CVPR_2025_paper.html

- Error Detection in Egocentric Procedural Task Videos

  Citation: Shih-Po Lee, Zijia Lu, Zekun Zhang, Minh Hoai, Ehsan Elhamifar. (2024). Error Detection in Egocentric Procedural Task Videos. CVPR, 18655-18666. https://doi.org/10.1109/CVPR52733.2024.01765

- FACT: Frame-Action Cross-Attention Temporal Modeling for Efficient Action Segmentation

  Citation: Zijia Lu, Ehsan Elhamifar. (2024). FACT: Frame-Action Cross-Attention Temporal Modeling for Efficient Action Segmentation. CVPR, 18175-18185. https://doi.org/10.1109/CVPR52733.2024.01721

- Learning to Segment Referred Objects from Narrated Egocentric Videos

  Citation: Yuhan Shen, Huiyu Wang, Xitong Yang, Matt Feiszli, Ehsan Elhamifar, Lorenzo Torresani, Effrosyni Mavroudi. (2024). Learning to Segment Referred Objects from Narrated Egocentric Videos. CVPR, 14510-14520. https://doi.org/10.1109/CVPR52733.2024.01375

- Progress-Aware Online Action Segmentation for Egocentric Procedural Task Videos

  Citation: Yuhan Shen, Ehsan Elhamifar. (2024). Progress-Aware Online Action Segmentation for Egocentric Procedural Task Videos. CVPR, 18186-18197. https://doi.org/10.1109/CVPR52733.2024.01722

- Zero-Shot Attribute Attacks on Fine-Grained Recognition Models

  Citation: Nasim Shafiee, Ehsan Elhamifar. (2022). Zero-Shot Attribute Attacks on Fine-Grained Recognition Models. ECCV (5), 262-282. https://doi.org/10.1007/978-3-031-20065-6_16

- Set-Supervised Action Learning in Procedural Task Videos via Pairwise Order Consistency

  Citation: Zijia Lu, Ehsan Elhamifar. (2022). Set-Supervised Action Learning in Procedural Task Videos via Pairwise Order Consistency. CVPR, 19871-19881. https://doi.org/10.1109/CVPR52688.2022.01928

- Semi-Weakly-Supervised Learning of Complex Actions from Instructional Task Videos

  Citation: Yuhan Shen, Ehsan Elhamifar. (2022). Semi-Weakly-Supervised Learning of Complex Actions from Instructional Task Videos. CVPR, 3334-3344. https://doi.org/10.1109/CVPR52688.2022.00334

- Open-Vocabulary Instance Segmentation via Robust Cross-Modal Pseudo-Labeling

  Citation: Dat Huynh, Jason Kuen, Zhe Lin, Jiuxiang Gu, Ehsan Elhamifar. (2022). Open-Vocabulary Instance Segmentation via Robust Cross-Modal Pseudo-Labeling. CVPR, 7010-7021. https://doi.org/10.1109/CVPR52688.2022.00689

- Deep Supervised Summarization: Algorithm and Application to Learning Instructions

  Citation: Deep Supervised Summarization: Algorithm and Application to Learning Instructions, C. Xu and E. Elhamifar, Neural Information Processing Systems (NeurIPS), 2019.

- Unsupervised Procedure Learning via Joint Dynamic Summarization

  Citation: Unsupervised Procedure Learning via Joint Dynamic Summarization, E. Elhamifar and Z. Naing, International Conference on Computer Vision (ICCV), 2019.

- Facility Location: Approximate Submodularity and Greedy Algorithm

  Citation: Facility Location: Approximate Submodularity and Greedy Algorithm, E. Elhamifar, International Conference on Machine Learning (ICML), 2019.

- Subset Selection and Summarization in Sequential Data

  Citation: E. Elhamifar and M. C. De Paolis Kaluza, Neural Information Processing Systems (NIPS), 2017.

- Online Summarization via Submodular and Convex Optimization

  Citation: E. Elhamifar and M. C. De Paolis Kaluza, IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2017.

- Sparse Hidden Markov Models for Surgical Gesture Classification and Skill Evaluation

  Citation: Sparse Hidden Markov Models for Surgical Gesture Classification and Skill Evaluation, L. Tao, E. Elhamifar, S. Khudanpur, G. Hager, and R. Vidal, Information Processing in Computer Assisted Interventions (IPCAI), 2012.