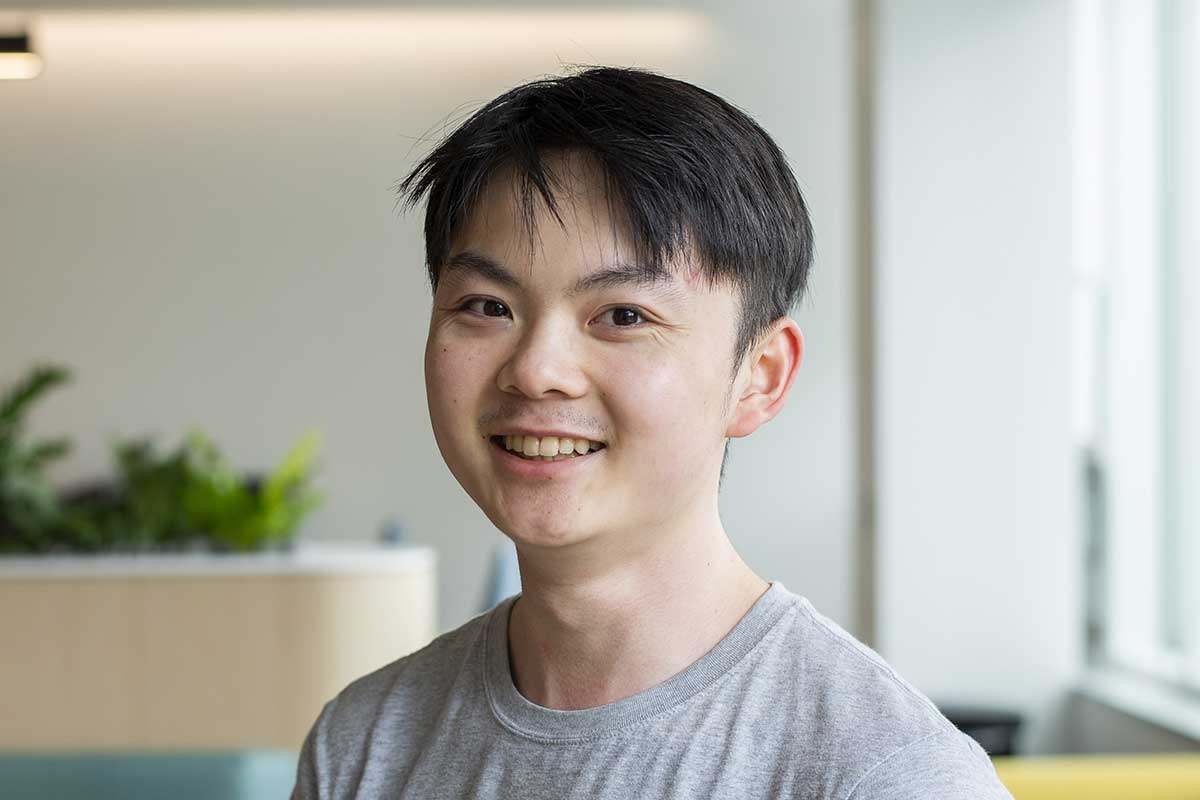

Christopher Amato

(he/him/his)

Associate Professor

Research interests

- Artificial intelligence

- Machine learning

- Robotics

Education

- PhD in Computer Science, University of Massachusetts, Amherst

- MS in Computer Science, University of Massachusetts, Amherst

- BA in Clinical Psychology and Philosophy, Tufts University

Biography

Christopher Amato is an associate professor in the Khoury College of Computer Sciences at Northeastern University, based in Boston.

Amato's research lies at the intersection of artificial intelligence, machine learning, and robotics. He heads the Lab for Learning and Planning in Robotics, where he and his team work on planning and reinforcement learning in partially observable and multi-agent/multi-robot systems. Amato has published widely in leading AI, machine learning, and robotics conferences, receiving a best paper prize at AAMAS-14 and best paper nominations at RSS-15, AAAI-19, and AAMAS-21. He has also co-organized several tutorials on multi-agent planning and learning, and has co-authored a book on the subject.

Before joining Northeastern, Amato was a research scientist at Aptima Inc., a research scientist and postdoctoral fellow at MIT, and an assistant professor at the University of New Hampshire.

Recent publications

LLM Collaboration with Multi-Agent Reinforcement Learning

Citation: Shuo Liu, Zeyu Liang, Xueguang Lyu, Christopher Amato. (2026). LLM Collaboration with Multi-Agent Reinforcement Learning. AAAI, 32150-32158. https://doi.org/10.1609/aaai.v40i38.40487

Adversarial Inception Backdoor Attacks against Reinforcement Learning

Citation: Ethan Rathbun, Alina Oprea, Christopher Amato. (2025). Adversarial Inception Backdoor Attacks against Reinforcement Learning. ICML. https://openreview.net/forum?id=yTsbZQTsAu

SleeperNets: Universal Backdoor Poisoning Attacks Against Reinforcement Learning Agents

Citation: Ethan Rathbun, Christopher Amato, Alina Oprea. (2024). SleeperNets: Universal Backdoor Poisoning Attacks Against Reinforcement Learning Agents. NeurIPS. http://papers.nips.cc/paper_files/paper/2024/hash/cb03b5108f1c3a38c990ef0b45bc8b31-Abstract-Conference.html

Shield Decomposition for Safe Reinforcement Learning in General Partially Observable Multi-Agent Environments

Citation: Daniel Melcer, Christopher Amato, Stavros Tripakis. (2024). Shield Decomposition for Safe Reinforcement Learning in General Partially Observable Multi-Agent Environments. RLJ, 4, 1965-1994. https://rlj.cs.umass.edu/2024/papers/Paper254.html

Robot Navigation in Unseen Environments using Coarse Maps

Citation: Chengguang Xu, Christopher Amato, Lawson L. S. Wong. (2024). Robot Navigation in Unseen Environments using Coarse Maps. ICRA, 2932-2938. https://doi.org/10.1109/ICRA57147.2024.10611256

On-Robot Bayesian Reinforcement Learning for POMDPs

Citation: Hai Nguyen, Sammie Katt, Yuchen Xiao, Christopher Amato. (2023). On-Robot Bayesian Reinforcement Learning for POMDPs. IROS, 9480-9487. https://doi.org/10.1109/IROS55552.2023.10342114

Trajectory-Aware Eligibility Traces for Off-Policy Reinforcement Learning

Citation: Brett Daley, Martha White, Christopher Amato, Marlos C. Machado. (2023). Trajectory-Aware Eligibility Traces for Off-Policy Reinforcement Learning. ICML, 6818-6835. https://proceedings.mlr.press/v202/daley23a.html

Improving Deep Policy Gradients with Value Function Search

Citation: Enrico Marchesini, Christopher Amato. (2023). Improving Deep Policy Gradients with Value Function Search. ICLR. https://openreview.net/pdf?id=6qZC7pfenQm

A Deeper Understanding of State-Based Critics in Multi-Agent Reinforcement Learning

Citation: Xueguang Lyu, Andrea Baisero, Yuchen Xiao, Christopher Amato. (2022). A Deeper Understanding of State-Based Critics in Multi-Agent Reinforcement Learning. AAAI, 9396-9404. https://ojs.aaai.org/index.php/AAAI/article/view/21171

Reconciling Rewards with Predictive State Representations

Citation: Andrea Baisero, Christopher Amato. (2021). Reconciling Rewards with Predictive State Representations. IJCAI, 2170-2176. https://doi.org/10.24963/ijcai.2021/299

To Ask or Not to Ask: A User Annoyance Aware Preference Elicitation Framework for Social Robots

Citation: Balint Gucsi, Danesh S. Tarapore, William Yeoh, Christopher Amato, Long Tran-Thanh. (2020). To Ask or Not to Ask: A User Annoyance Aware Preference Elicitation Framework for Social Robots. IROS, 7935-7940. https://doi.org/10.1109/IROS45743.2020.9341607

Learning Multi-Robot Decentralized Macro-Action-Based Policies via a Centralized Q-Net

Citation: Yuchen Xiao, Joshua Hoffman, Tian Xia, Christopher Amato. (2020). Learning Multi-Robot Decentralized Macro-Action-Based Policies via a Centralized Q-Net.

Decision-Making Under Uncertainty in Multi-Agent and Multi-Robot Systems: Planning and Learning

Citation: Christopher Amato. (2018). Decision-Making Under Uncertainty in Multi-Agent and Multi-Robot Systems: Planning and Learning. Proceedings of the Twenty-Seventh International Joint Conference on Artificial Intelligence (IJCAI-18), July 2018.

Near-Optimal Adversarial Policy Switching for Decentralized Asynchronous Multi-Agent Systems

Citation: Nghia Hoang, Yuchen Xiao, Kavinayan Sivakumar, Christopher Amato, Jonathan P. How. (2018). Near-Optimal Adversarial Policy Switching for Decentralized Asynchronous Multi-Agent Systems. Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA-18), May 2018.

COG-DICE: An Algorithm for Solving Continuous-Observation Dec-POMDPs

Citation: Madison Clark-Turner, Christopher Amato. (2017). COG-DICE: An Algorithm for Solving Continuous-Observation Dec-POMDPs. Proceedings of the Twenty-Sixth International Joint Conference on Artificial Intelligence (IJCAI-17), August 2017.